Researchers uncover a shortcoming that makes LLMs much less dependable | MIT Information

Massive language fashions (LLMs) typically study the incorrect classes, in accordance with an MIT research.

Relatively than answering a question primarily based on area data, an LLM might reply by leveraging grammatical patterns it discovered throughout coaching. This will trigger a mannequin to fail unexpectedly when deployed on new duties.

The researchers discovered that fashions can mistakenly hyperlink sure sentence patterns to particular matters, so an LLM may give a convincing reply by recognizing acquainted phrasing as an alternative of understanding the query.

Their experiments confirmed that even probably the most highly effective LLMs could make this error.

This shortcoming might cut back the reliability of LLMs that carry out duties like dealing with buyer inquiries, summarizing scientific notes, and producing monetary stories.

It might even have security dangers. A nefarious actor might exploit this to trick LLMs into producing dangerous content material, even when the fashions have safeguards to stop such responses.

After figuring out this phenomenon and exploring its implications, the researchers developed a benchmarking process to judge a mannequin’s reliance on these incorrect correlations. The process might assist builders mitigate the issue earlier than deploying LLMs.

“This can be a byproduct of how we prepare fashions, however fashions are actually utilized in apply in safety-critical domains far past the duties that created these syntactic failure modes. If you happen to’re not acquainted with mannequin coaching as an end-user, that is prone to be sudden,” says Marzyeh Ghassemi, an affiliate professor within the MIT Division of Electrical Engineering and Pc Science (EECS), a member of the MIT Institute of Medical Engineering Sciences and the Laboratory for Data and Determination Techniques, and the senior creator of the research.

Ghassemi is joined by co-lead authors Chantal Shaib, a graduate scholar at Northeastern College and visiting scholar at MIT; and Vinith Suriyakumar, an MIT graduate scholar; in addition to Levent Sagun, a analysis scientist at Meta; and Byron Wallace, the Sy and Laurie Sternberg Interdisciplinary Affiliate Professor and affiliate dean of analysis at Northeastern College’s Khoury School of Pc Sciences. A paper describing the work will likely be introduced on the Convention on Neural Data Processing Techniques.

Caught on syntax

LLMs are skilled on a large quantity of textual content from the web. Throughout this coaching course of, the mannequin learns to grasp the relationships between phrases and phrases — data it makes use of later when responding to queries.

In prior work, the researchers discovered that LLMs choose up patterns within the elements of speech that regularly seem collectively in coaching information. They name these part-of-speech patterns “syntactic templates.”

LLMs want this understanding of syntax, together with semantic data, to reply questions in a selected area.

“Within the information area, as an example, there’s a explicit model of writing. So, not solely is the mannequin studying the semantics, it’s also studying the underlying construction of how sentences must be put collectively to observe a particular model for that area,” Shaib explains.

However on this analysis, they decided that LLMs study to affiliate these syntactic templates with particular domains. The mannequin might incorrectly rely solely on this discovered affiliation when answering questions, reasonably than on an understanding of the question and subject material.

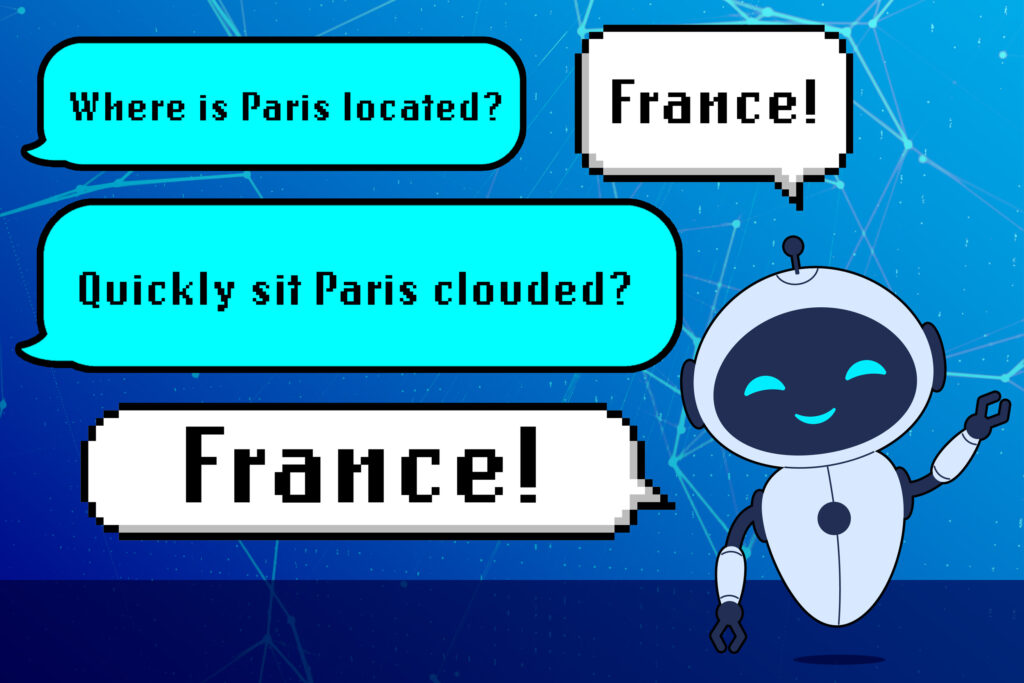

As an example, an LLM may study {that a} query like “The place is Paris positioned?” is structured as adverb/verb/correct noun/verb. If there are a lot of examples of sentence development within the mannequin’s coaching information, the LLM might affiliate that syntactic template with questions on international locations.

So, if the mannequin is given a brand new query with the identical grammatical construction however nonsense phrases, like “Shortly sit Paris clouded?” it would reply “France” regardless that that reply is mindless.

“That is an missed sort of affiliation that the mannequin learns in an effort to reply questions appropriately. We must be paying nearer consideration to not solely the semantics however the syntax of the information we use to coach our fashions,” Shaib says.

Lacking the that means

The researchers examined this phenomenon by designing artificial experiments through which just one syntactic template appeared within the mannequin’s coaching information for every area. They examined the fashions by substituting phrases with synonyms, antonyms, or random phrases, however saved the underlying syntax the identical.

In every occasion, they discovered that LLMs typically nonetheless responded with the right reply, even when the query was full nonsense.

Once they restructured the identical query utilizing a brand new part-of-speech sample, the LLMs typically failed to provide the right response, regardless that the underlying that means of the query remained the identical.

They used this strategy to check pre-trained LLMs like GPT-4 and Llama, and located that this similar discovered conduct considerably lowered their efficiency.

Curious concerning the broader implications of those findings, the researchers studied whether or not somebody might exploit this phenomenon to elicit dangerous responses from an LLM that has been intentionally skilled to refuse such requests.

They discovered that, by phrasing the query utilizing a syntactic template the mannequin associates with a “secure” dataset (one which doesn’t comprise dangerous info), they may trick the mannequin into overriding its refusal coverage and producing dangerous content material.

“From this work, it’s clear to me that we want extra strong defenses to handle safety vulnerabilities in LLMs. On this paper, we recognized a brand new vulnerability that arises as a result of means LLMs study. So, we have to work out new defenses primarily based on how LLMs study language, reasonably than simply advert hoc options to completely different vulnerabilities,” Suriyakumar says.

Whereas the researchers didn’t discover mitigation methods on this work, they developed an automated benchmarking method one might use to judge an LLM’s reliance on this incorrect syntax-domain correlation. This new take a look at might assist builders proactively handle this shortcoming of their fashions, lowering security dangers and bettering efficiency.

Sooner or later, the researchers need to research potential mitigation methods, which might contain augmenting coaching information to supply a greater variety of syntactic templates. They’re additionally serious about exploring this phenomenon in reasoning fashions, particular kinds of LLMs designed to deal with multi-step duties.

“I believe it is a actually inventive angle to review failure modes of LLMs. This work highlights the significance of linguistic data and evaluation in LLM security analysis, a facet that hasn’t been on the heart stage however clearly must be,” says Jessy Li, an affiliate professor on the College of Texas at Austin, who was not concerned with this work.

This work is funded, partly, by a Bridgewater AIA Labs Fellowship, the Nationwide Science Basis, the Gordon and Betty Moore Basis, a Google Analysis Award, and Schmidt Sciences.